I do not know how to reproduce proofs in Analysis exams, but in this post I will try to understand why we study Analysis. Most of us believe that Analysis is the same as rigorous Calculus. Also, what makes Mathematics different from Physics is “rigour”. But why do mathematicians worry so much about rigour? To answer this question, one needs to understand what is called “Analysis” in mathematics.

A standard definition of Analysis is (as in [R]):

Analysis is the systematic study of real and complex-valued continuous functions.

The above definition tells us what we will achieve by applying our understanding of Analysis, but it doesn’t explain what “Analysis” itself is.

Clearly, analysis has its roots in calculus. Newton and Leibniz defined differentiation and integration without bothering about the definition of a limit. Euler found the correct sums of various infinite series by implicitly assuming an “Algebra of infinite series”, which doesn’t exist! I myself have used the commutativity of addition of real numbers for the terms of an infinite series by assuming such an “Algebra of infinite series”!! Great mathematicians like Euler and Laplace, who even solved differential equations, never bothered to think about the foundations of calculus, because they studied only functions of a real variable arising from physical problems, and the only series they considered were power series.
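To see why commutativity cannot be assumed for infinite sums, here is a minimal sketch in Python. The function names are my own, chosen for illustration. It adds up the terms of the alternating harmonic series in two different orders: the usual order approaches $\ln 2$, while the rearrangement "one positive term, then two negative terms" approaches $\frac{1}{2}\ln 2$, even though both use exactly the same terms.

```python
import math

def alternating_harmonic(n_terms):
    """Partial sum of 1 - 1/2 + 1/3 - 1/4 + ... in the usual order."""
    return sum((-1) ** (k + 1) / k for k in range(1, n_terms + 1))

def rearranged(n_blocks):
    """Same terms, reordered as 1 - 1/2 - 1/4 + 1/3 - 1/6 - 1/8 + ...

    Block j contains the positive term 1/(2j-1) followed by the two
    negative terms 1/(4j-2) and 1/(4j), so every term of the original
    series appears exactly once.
    """
    total = 0.0
    for j in range(1, n_blocks + 1):
        total += 1 / (2 * j - 1) - 1 / (4 * j - 2) - 1 / (4 * j)
    return total

# Compare both orderings with ln 2 and (ln 2) / 2.
print(alternating_harmonic(100_000), math.log(2))
print(rearranged(100_000), math.log(2) / 2)
```

Each block simplifies to $\frac{1}{2}\left(\frac{1}{2j-1} - \frac{1}{2j}\right)$, which is why the rearranged sum is exactly half of the original one.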

Without bothering about foundations, great mathematicians could still arrive at correct answers intuitively, thanks to their deep insight, but it became extremely difficult to teach such “deep insight” based mathematics to students. Without a sense of rigour it also became difficult to prove claims in general cases (like the difference between point-wise continuity and uniform continuity).

This led to a belief that:

Calculus (and thus Mathematics) is as good as a theory of ghosts, i.e. without any basis.

Also, it became impossible for mathematicians to apply the techniques of calculus beyond physical situations, i.e. generalization of concepts was not possible.

To get rid of such allegations, Lagrange suggested that the only way to make calculus rigorous is to reduce it to Algebra (since algebra has an inherent power of generalization). To illustrate this he defined the derivative of a real function $f$ as the coefficient of the linear term in $h$ in the Taylor series expansion for $f(x+h)$. Again this was wrong without consideration of limits and convergence, since there is no “Algebra of infinite series”!!! But this idea of using Algebra to make calculus rigorous was successfully realized by Cauchy, who used the “Algebra of Inequalities” (though he too implicitly assumed the completeness property of real numbers) by introducing $\varepsilon$ and $\delta$ (though not explicitly, but in words).
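For comparison, here are the two definitions of the derivative side by side. This is written in modern notation, which is my assumption and not the wording used by Lagrange or Cauchy themselves. The first line is Lagrange's algebraic idea and the second is the inequality-based definition.

```latex
% Lagrange: f'(x) is the coefficient of h in the series expansion
f(x+h) = f(x) + f'(x)\,h + \frac{f''(x)}{2!}\,h^2 + \cdots

% Inequality form: for every \varepsilon > 0 there is a \delta > 0 such that
\left| \frac{f(x+h) - f(x)}{h} - f'(x) \right| < \varepsilon
\quad \text{whenever } 0 < |h| < \delta .
```

The second form never mentions an infinite series, so it needs no “Algebra of infinite series”; it only needs an inequality to hold for all sufficiently small $h$.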

How did the “Algebra of Inequalities” become the technique for creating “rigorous calculus”, which we know as “Analysis”? One main part of calculus was “Approximations”, i.e. computing an upper bound on the error in an approximation, such as the difference between the sum of a series and its $n^{\text{th}}$ partial sum. Thus the “tool of approximation” was transformed into a “tool of rigour”.
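As a small illustration of an error bound for a series, here is a sketch for the series $e = \sum_{k \ge 0} 1/k!$. The choice of this series and the function names are mine, not taken from the historical sources. The tail after the $n^{\text{th}}$ partial sum is bounded by comparing it with a geometric series, and the bound then tells us in advance how many terms are enough for a prescribed accuracy.

```python
import math

def exp_partial_sum(n):
    """n-th partial sum of e = 1/0! + 1/1! + 1/2! + ... (terms k = 0..n)."""
    return sum(1 / math.factorial(k) for k in range(n + 1))

def remainder_bound(n):
    """Upper bound on e - exp_partial_sum(n).

    For j >= 0 we have 1/(n+1+j)! <= (1/(n+1)!) * (1/(n+2))**j, so the
    tail is at most a geometric series with sum (1/(n+1)!) * (n+2)/(n+1).
    """
    return (n + 2) / ((n + 1) * math.factorial(n + 1))

def terms_needed(eps):
    """Smallest n whose guaranteed error bound is below eps."""
    n = 0
    while remainder_bound(n) >= eps:
        n += 1
    return n

for n in range(6):
    error = math.e - exp_partial_sum(n)
    print(n, error, remainder_bound(n))
```

The function `terms_needed` is the “given $\varepsilon$, find $n$” step in miniature: the inequality is used not to compute the sum, but to guarantee how close a partial sum must be.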

Initially, the integral was thought of as the inverse of the differential. But sometimes the inverse could not be computed exactly, so Euler remarked that the integral could be approximated as closely as one liked by a sum (compare the geometric picture of an area being approximated by rectangles). A better definition of the integral then emerged from the work of various mathematicians on approximating the values of definite integrals. Poisson was interested in complex integration and was concerned about the behaviour and existence of integrals. He stated and proved “The fundamental proposition of the theory of definite integrals”, using an inequality result: the Taylor series with remainder. This was the first attempt to prove the equivalence of the antiderivative and limit-of-sums conceptions of the integral. But Poisson implicitly assumed the existence of antiderivatives and bounded first derivatives for $f$ on the given interval, and his proof also assumed that the subintervals on which the sum is taken are all equal. Again, Cauchy added rigour to Poisson’s proof.
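The idea of approximating an integral by a sum, with a guaranteed error, can be sketched as follows. This is only an illustration under the same kind of assumptions mentioned above (equal subintervals and a bounded first derivative), not a reconstruction of Poisson's or Cauchy's argument; the function names are hypothetical. If $|f'(x)| \le M$ on $[a, b]$, the left-endpoint sum with $n$ equal subintervals differs from the integral by at most $M (b-a)^2 / (2n)$.

```python
def left_riemann_sum(f, a, b, n):
    """Sum of f at the left endpoints of n equal subintervals, times the width."""
    h = (b - a) / n
    return h * sum(f(a + i * h) for i in range(n))

def error_bound(max_abs_derivative, a, b, n):
    """Bound on |integral - left_riemann_sum| when |f'| <= max_abs_derivative.

    On each subinterval of width h, |f(x) - f(left endpoint)| <= M * (x - left),
    which integrates to at most M * h**2 / 2; summing n such pieces gives
    M * (b - a)**2 / (2 * n).
    """
    return max_abs_derivative * (b - a) ** 2 / (2 * n)

# Example: f(x) = x^2 on [0, 1], with antiderivative x^3 / 3 and |f'| <= 2.
f = lambda x: x * x
exact = 1 / 3  # F(1) - F(0) with F(x) = x^3 / 3
for n in (10, 100, 1000):
    approx = left_riemann_sum(f, 0.0, 1.0, n)
    print(n, abs(exact - approx), error_bound(2.0, 0.0, 1.0, n))
```

As $n$ grows, the bound goes to zero, so the limit of the sums agrees with the value obtained from the antiderivative.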

Since most algebraic formulas hold only under certain conditions, and for certain values of the quantities they contain, one could not assume that what worked for finite expressions automatically worked for infinite ones. Also, just because there was an operation called “taking a derivative” did not mean that the inverse of that operation always produced a result. The existence of the definite integral had to be proved. Borrowing from Lagrange the mean value theorem for integrals, Cauchy finally proved the “Fundamental Theorem of Calculus”.

Thus, algebraic approximations produced the algebra of inequalities. The application of the algebra of inequalities led to the concept of approximations in calculus, which in turn led to 3 key concepts: “error bounds for series” (d’Alembert), “inequalities about derivatives” (Lagrange) and “approximations to integrals” (Euler). I believe that these three concepts, combined with rigour, led to what we call “Analysis” in Mathematics.

The subject of analysis itself consists of 4 main flavours:

- Real Analysis

- Complex Analysis

- Functional Analysis

- Harmonic Analysis

with the generalization of basic tools in terms of measure theory (leading to a generalization of integration) and the calculus of several variables. For example, differentiating a function of several variables $f : U \to \mathbb{R}^m$, where $U \subseteq \mathbb{R}^n$, leads to a linear transformation from $\mathbb{R}^n$ to $\mathbb{R}^m$ (or equivalently, an $m \times n$ matrix with real-valued entries) instead of a real number, with the norm of the increment appearing in the denominator of the defining limit. Also, we can generalize the concept of Taylor series to functions of several variables using the notion of “partial derivatives”.
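One standard way to write these two statements is given below. The notation ($Df(a)$ for the derivative at a point $a \in U$, multi-indices $\alpha$ for the partial derivatives) is my assumption, since several equivalent conventions are in use.

```latex
% Derivative of f : U \to \mathbb{R}^m at a \in U (U open):
% the linear map Df(a) : \mathbb{R}^n \to \mathbb{R}^m such that
\lim_{h \to 0} \frac{\lVert f(a+h) - f(a) - Df(a)\,h \rVert}{\lVert h \rVert} = 0,
\qquad
Df(a) = \left[ \frac{\partial f_i}{\partial x_j}(a) \right]_{1 \le i \le m,\; 1 \le j \le n}

% Taylor expansion of a C^k function f : U \to \mathbb{R} about a,
% with \alpha = (\alpha_1, \dots, \alpha_n), |\alpha| = \alpha_1 + \cdots + \alpha_n,
% \alpha! = \alpha_1! \cdots \alpha_n!, and h^\alpha = h_1^{\alpha_1} \cdots h_n^{\alpha_n}:
f(a+h) = \sum_{|\alpha| \le k} \frac{D^{\alpha} f(a)}{\alpha!}\, h^{\alpha}
         + o\!\left(\lVert h \rVert^{k}\right)
\quad \text{as } h \to 0 .
```

For $n = m = 1$ the first line reduces to the usual limit of the difference quotient, and the second to the familiar one-variable Taylor polynomial.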

Using the “change of variable theorem” we can evaluate integrals of functions of several variables over a “cell” by evaluating multiple integrals. Finally, using the concept of “differential forms”, originating from geometry, we can prove Stokes’ theorem, of which the “fundamental theorem of calculus” turns out to be a special case (along with many other important theorems, like Green’s theorem and the Divergence theorem).
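In symbols, the general Stokes’ theorem and its one-dimensional special case read as follows. The letters $M$, $\omega$ and $f$ are my choice of notation: $M$ is an oriented $k$-dimensional manifold with boundary $\partial M$, and $\omega$ is a (compactly supported) differential $(k-1)$-form on $M$.

```latex
% General Stokes' theorem
\int_{M} d\omega = \int_{\partial M} \omega

% Special case: M = [a, b], \omega = f (a 0-form), so d\omega = f'(x)\,dx
% and \partial M consists of the points b (counted +) and a (counted -)
\int_{a}^{b} f'(x)\,dx = f(b) - f(a)
```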

References:

[G] J. V. Grabiner, “Who Gave You the Epsilon? Cauchy and the Origins of Rigorous Calculus”, American Mathematical Monthly 90 (1983), 185–194.

[R] John Renze and Eric W. Weisstein, “Analysis.” From MathWorld–A Wolfram Web Resource. http://mathworld.wolfram.com/Analysis.html

[S] Ian Stewart, “analysis | mathematics”. Encyclopedia Britannica. http://www.britannica.com/topic/analysis-mathematics

[X] “Mathematical analysis”. Encyclopedia of Mathematics. http://www.encyclopediaofmath.org/index.php?title=Mathematical_analysis&oldid=31489

[SM] Maurice Sion, “History of measure theory in the twentieth century”. www.math.ubc.ca/~marcus/Math507420/Math507420hist.pdf

[H] Barbara Hubbard and John H. Hubbard, “Vector Calculus, Linear Algebra, and Differential Forms: A Unified Approach”, Prentice Hall.

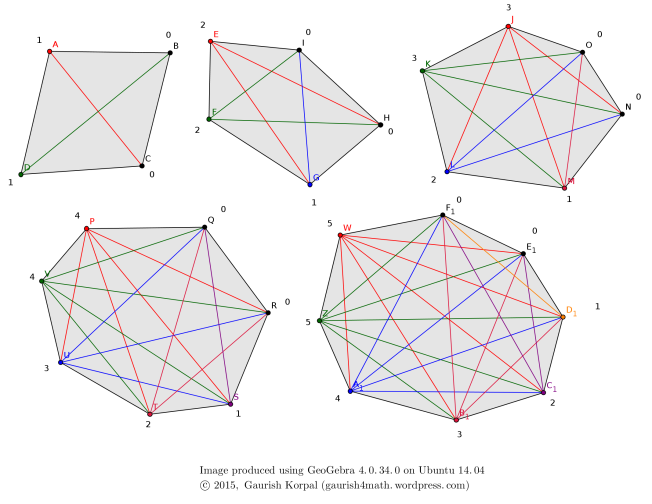

-sided polygon, and start drawing diagonals from each vertex one-by-one. While doing so count the number of new diagonals contributed by each vertex. Here is the “Experiment” done for

.

-sided polygon follows the sequence:

diagonals.

).

diagonals.

diagonals.

diagonals.

vertex, consider the restriction to contribution caused by

to

vertices. Thus for

we get the number of new diagonals contributed by

vertex equal to

.

vertex we end up with zero (new) contribution,

vertex will also contribute zero diagonals.
