About 2.5 years ago I promised Joseph Nebus that I would write about the interplay between Bernoulli numbers and the Riemann zeta function. In this post I will discuss a problem about finite harmonic sums that illustrates this interplay.

Consider Problem 1.37 from The Math Problems Notebook:

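Let $\{a_1, a_2, \ldots, a_n\}$ be a set of natural numbers such that $\gcd(a_i, a_j) = 1$ for $i \neq j$, and the $a_i$ are not prime numbers. Show that $\frac{1}{a_1} + \frac{1}{a_2} + \cdots + \frac{1}{a_n} < 2$.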

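Since each $a_i$ is a composite number, we have $a_i = p_i q_i s_i$ for some, not necessarily distinct, primes $p_i$ and $q_i$ and some natural number $s_i$. Next, $\gcd(a_i, a_j) = 1$ implies that $\min(p_i, q_i) \neq \min(p_j, q_j)$ for $i \neq j$. Therefore we have:

$$\sum_{i=1}^{n} \frac{1}{a_i} \leq \sum_{i=1}^{n} \frac{1}{p_i q_i} \leq \sum_{i=1}^{n} \frac{1}{\min(p_i, q_i)^2} < \sum_{k=1}^{\infty} \frac{1}{k^2}$$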
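As a quick numerical sanity check of the claimed bound, here is a minimal sketch in Python. The example set (squares of the primes up to 100) and the helper names `primes_up_to` and `reciprocal_sum` are my own choices for illustration, not part of the original problem; any pairwise coprime set of composite numbers would do.

```python
from fractions import Fraction
from itertools import combinations
from math import gcd, pi


def primes_up_to(limit):
    """Return all primes <= limit using a simple sieve of Eratosthenes."""
    sieve = [True] * (limit + 1)
    sieve[0:2] = [False, False]
    for p in range(2, int(limit ** 0.5) + 1):
        if sieve[p]:
            for multiple in range(p * p, limit + 1, p):
                sieve[multiple] = False
    return [n for n, is_prime in enumerate(sieve) if is_prime]


def reciprocal_sum(numbers):
    """Exact sum of the reciprocals 1/a over the given integers."""
    return sum(Fraction(1, a) for a in numbers)


# Hypothetical example set: squares of distinct primes are composite
# and pairwise coprime, so they satisfy the hypotheses of the problem.
example_set = [p * p for p in primes_up_to(100)]
assert all(gcd(a, b) == 1 for a, b in combinations(example_set, 2))

total = reciprocal_sum(example_set)
print(float(total))                    # the reciprocal sum for this set
print(float(total) < pi ** 2 / 6 < 2)  # the chain of bounds being checked
```

Squares of primes are the extreme case for the argument, since each one is exactly the square of its smallest prime factor.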

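Though it’s easy to show that $\sum_{k=1}^{\infty} \frac{1}{k^2} < 2$ (since $\frac{1}{k^2} < \frac{1}{k-1} - \frac{1}{k}$ for $k \geq 2$, and the right-hand side telescopes), we desire to find the exact value of this sum. This is where it’s convenient to recognize that $\sum_{k=1}^{\infty} \frac{1}{k^2} = \zeta(2)$. Since we know what Bernoulli numbers are, we can use the following formula for the Riemann zeta function: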

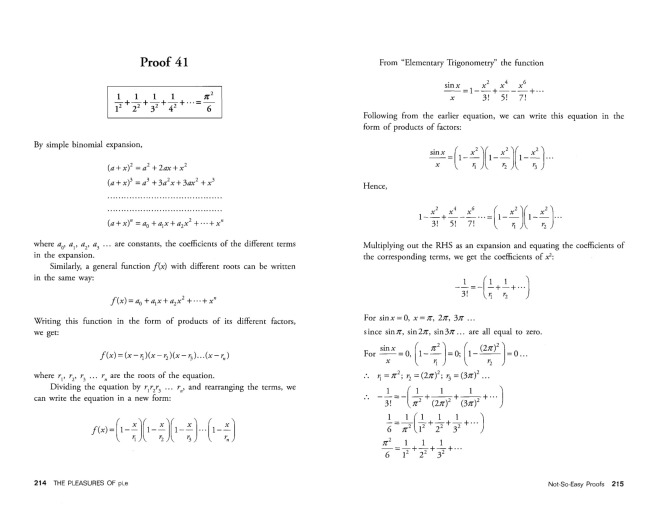

There are many ways of proving this formula, but none of them is elementary.
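To see the formula in action, here is a minimal sketch in Python. It assumes the standard closed form $\zeta(2n) = \frac{(-1)^{n+1} B_{2n} (2\pi)^{2n}}{2\,(2n)!}$ and the convention $B_1 = -\frac{1}{2}$; the function names `bernoulli_numbers` and `zeta_even` are hypothetical, chosen only for this example.

```python
from fractions import Fraction
from math import comb, factorial, pi


def bernoulli_numbers(m):
    """Return [B_0, ..., B_m] as exact fractions.

    Uses the recurrence sum_{j=0}^{k} C(k+1, j) * B_j = 0 for k >= 1,
    which gives the convention B_1 = -1/2.
    """
    B = [Fraction(1)]
    for k in range(1, m + 1):
        acc = sum(comb(k + 1, j) * B[j] for j in range(k))
        B.append(-acc / (k + 1))
    return B


def zeta_even(n):
    """Evaluate zeta(2n) from the Bernoulli-number closed form (assumed above)."""
    B = bernoulli_numbers(2 * n)
    # Rational coefficient of pi^(2n), kept exact until the final step.
    coeff = (-1) ** (n + 1) * B[2 * n] * 2 ** (2 * n) / (2 * factorial(2 * n))
    return float(coeff) * pi ** (2 * n)


# Compare the closed form with a partial sum of the series for zeta(2).
partial = sum(1 / k ** 2 for k in range(1, 100001))
print(zeta_even(1), partial)
```

The partial sum approaches the closed-form value slowly, with an error of roughly $\frac{1}{N}$ after $N$ terms, which is one reason an exact formula is worth having.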

Recall that $B_2 = \frac{1}{6}$, so for $n = 1$ we have $\zeta(2) = \frac{\pi^2}{6}$. Since $\frac{\pi^2}{6} < 2$, this completes the proof:

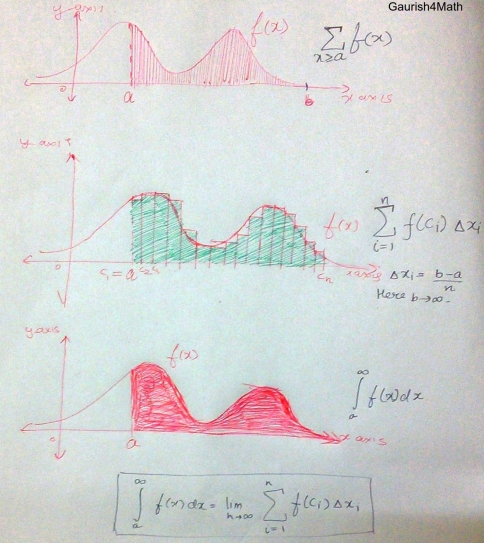

Remark: One can directly calculate the value of $\zeta(2)$, as Euler did while solving the Basel problem (though at that time the notion of convergence itself was not well defined):

The Pleasures of Pi, E and Other Interesting Numbers by Y E O Adrian [Copyright © 2006 by World Scientific Publishing Co. Pte. Ltd.]
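For reference, the usual outline of Euler's argument runs as follows. This is a sketch of the standard textbook version, not necessarily the presentation in the book cited above, and it takes the infinite product for $\sin x$ for granted, as Euler did. Expand $\frac{\sin x}{x}$ both as a product over its zeros and as a power series:

$$
\frac{\sin x}{x} = \prod_{k=1}^{\infty} \left(1 - \frac{x^2}{k^2 \pi^2}\right) = 1 - \frac{x^2}{3!} + \frac{x^4}{5!} - \cdots
$$

Comparing the coefficients of $x^2$ on both sides gives

$$
-\sum_{k=1}^{\infty} \frac{1}{k^2 \pi^2} = -\frac{1}{6}
\quad\Longrightarrow\quad
\sum_{k=1}^{\infty} \frac{1}{k^2} = \frac{\pi^2}{6}.
$$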
