The following are some terms used in topology whose definitions or literal English meanings are similar:

- Convex set: A subset $U$ of $\mathbb{R}^n$ is called convex¹ if it contains, along with any pair of its points $x, y$, also the entire line segment joining the points.

- Star-convex set: A subset $U$ of $\mathbb{R}^n$ is called star-convex if there exists a point $p \in U$ such that for each $x \in U$, the line segment joining $p$ to $x$ lies in $U$.

- Simply connected: A topological space $X$ is called simply connected if it is path-connected² and any loop in $X$ defined by $f: S^1 \to X$ can be contracted³ to a point.

- Deformation retract: Let $A$ be a subspace of $X$. We say $X$ deformation retracts to $A$ if there exists a retraction⁴ $r: X \to A$ such that its composition with the inclusion $i: A \to X$ is homotopic⁵ to the identity map on $X$.
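The difference between convex and star-convex sets can be probed numerically. The following is a minimal sketch, not a proof: it samples finitely many points $(1-t)p + tq$ on a segment and tests them against a membership predicate. The names `segment_inside`, `in_disk`, `in_annulus` and `in_plus`, and the particular shapes chosen, are illustrative assumptions and not part of the definitions above.

```python
import math

def segment_inside(contains, p, q, samples=200):
    """Return True if every sampled point (1-t)p + tq, t in [0, 1], satisfies `contains`.

    This is only a finite sampling check, so it can miss thin gaps.
    """
    for k in range(samples + 1):
        t = k / samples
        point = ((1 - t) * p[0] + t * q[0], (1 - t) * p[1] + t * q[1])
        if not contains(point):
            return False
    return True

def in_disk(point):
    # Closed unit disk: convex.
    return math.hypot(*point) <= 1.0

def in_annulus(point):
    # Annulus with inner radius 0.5 and outer radius 1: not even star-convex.
    return 0.5 <= math.hypot(*point) <= 1.0

def in_plus(point):
    # Plus-shaped region (union of two bars): star-convex about the origin, not convex.
    x, y = point
    return (abs(x) <= 1.0 and abs(y) <= 0.2) or (abs(x) <= 0.2 and abs(y) <= 1.0)

# A segment between two points of the disk stays in the disk.
disk_ok = segment_inside(in_disk, (-0.9, 0.0), (0.9, 0.0))
# The same segment leaves the annulus, because it crosses the hole.
annulus_ok = segment_inside(in_annulus, (-0.9, 0.0), (0.9, 0.0))
# In the plus shape, segments from the centre stay inside ...
plus_from_centre = segment_inside(in_plus, (0.0, 0.0), (0.9, 0.0))
# ... but a segment between the tips of two different arms does not.
plus_tip_to_tip = segment_inside(in_plus, (0.9, 0.0), (0.0, 0.9))
```

Under these assumptions the disk passes the check, the annulus fails it, and the plus shape passes only for segments that start at its centre, which matches the idea that star-convexity asks for one good base point rather than all pairs of points.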

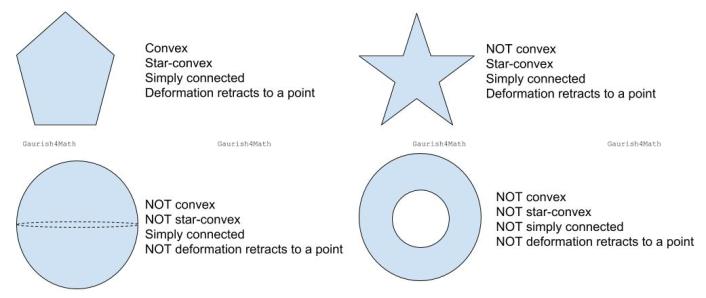

Various examples to illustrate the interdependence of these terms. Shown here are a pentagon, a star, a sphere, and an annulus.

A stronger version of the Jordan curve theorem, known as the Jordan–Schoenflies theorem, implies that the interior of a simple polygon is always a simply connected subset of the Euclidean plane. This statement becomes false in higher dimensions.

The n-dimensional sphere $S^n$ is simply connected if and only if $n \geq 2$. Every star-convex subset of $\mathbb{R}^n$ is simply connected. A torus, the (elliptic) cylinder, the Möbius strip, the projective plane and the Klein bottle are NOT simply connected.
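The claim about star-convex sets can be justified with a straight-line homotopy. As a sketch, write $U$ for a star-convex subset of $\mathbb{R}^n$ and $p$ for a point of $U$ from which every other point of $U$ is visible (this notation is assumed here for illustration):

```latex
\begin{align*}
H &\colon U \times [0,1] \to U, & H(x,t) &= (1-t)\,x + t\,p, \\
H(x,0) &= x, & H(x,1) &= p \quad \text{for all } x \in U .
\end{align*}
```

Each point $H(x,t)$ lies on the line segment joining $x$ to $p$, which is contained in $U$ by star-convexity, so $H$ is well defined and continuous. Any two points of $U$ are joined by a path through $p$, so $U$ is path-connected, and for a loop $f$ in $U$ the maps $s \mapsto H(f(s), t)$ shrink $f$ to the constant loop at $p$.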

The boundary of the n-dimensional ball $B^n$, that is, the $(n-1)$-sphere $S^{n-1}$, is not a retract of the ball. Using this we can prove the Brouwer fixed-point theorem. However, $\mathbb{R}^n \setminus \{0\}$ deformation retracts to the sphere $S^{n-1}$. Hence, though the sphere shown above doesn't deformation retract to a point, it is a deformation retract of $\mathbb{R}^3 \setminus \{0\}$.
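A standard explicit example of a deformation retraction onto a sphere is radial projection of punctured Euclidean space onto the unit sphere. As a sketch, with $\|x\|$ denoting the Euclidean norm and $H$ a name chosen here for the homotopy:

```latex
\begin{align*}
H &\colon \bigl(\mathbb{R}^n \setminus \{0\}\bigr) \times [0,1] \to \mathbb{R}^n \setminus \{0\}, &
H(x,t) &= (1-t)\,x + t\,\frac{x}{\|x\|}
        = \Bigl(1 - t + \frac{t}{\|x\|}\Bigr)\,x, \\
H(x,0) &= x, &
H(x,1) &= \frac{x}{\|x\|} \in S^{n-1}.
\end{align*}
```

The scalar factor $1 - t + t/\|x\|$ is strictly positive for every $t \in [0,1]$, so $H(x,t)$ never hits the origin. Points with $\|x\| = 1$ are fixed for all $t$, so the map $x \mapsto x/\|x\|$ is a retraction onto $S^{n-1}$ and $H$ is a homotopy from the identity to that retraction followed by the inclusion.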

Footnotes

- In general, convexity is defined for subsets of a vector space: a set is convex if all the points on the straight line segment between any two points of the set are also contained in the set. If $x$ and $y$ are points in the vector space, the points on the straight line segment between $x$ and $y$ are given by $(1-t)x + ty$ for all $t$ from 0 to 1.

- A path from a point $x$ to a point $y$ in a topological space $X$ is a continuous function $f$ from the unit interval $[0,1]$ to $X$ with $f(0) = x$ and $f(1) = y$. The space $X$ is said to be path-connected if there is a path joining any two points in $X$.

- That is, for the loop $f: S^1 \to X$ there exists a continuous map $F: D^2 \to X$ such that $F$ restricted to $S^1$ is $f$. Here, $S^1$ and $D^2$ denote the unit circle and the closed unit disk in the Euclidean plane, respectively. In general, a space $X$ is contractible if it has the homotopy type of a point. Intuitively, two spaces $X$ and $Y$ are homotopy equivalent if they can be transformed into one another by bending, shrinking and expanding operations.

- With $A$ a subspace of $X$, a continuous map $r: X \to A$ is a retraction if the restriction of $r$ to $A$ is the identity map on $A$.

- A homotopy between two continuous functions $f$ and $g$ from a topological space $X$ to a topological space $Y$ is defined to be a continuous function $H: X \times [0,1] \to Y$ such that, if $x \in X$, then $H(x,0) = f(x)$ and $H(x,1) = g(x)$. A deformation retraction is a special type of homotopy equivalence, i.e. a mapping which captures the idea of continuously shrinking a space into a subspace.
